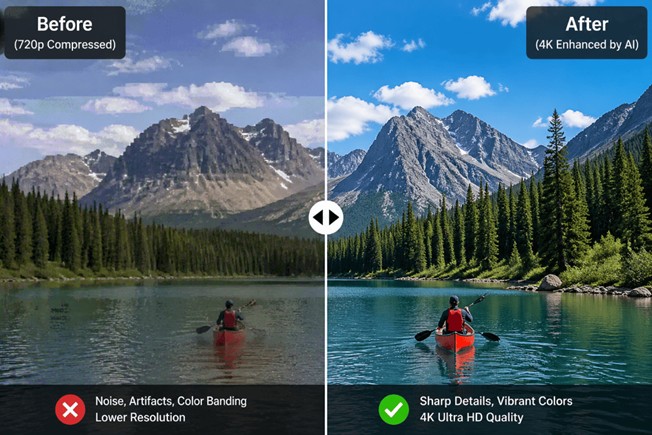

The global video economy has reached an inflection point. With streaming platforms now serving 4K as a baseline and 8K broadcasts entering commercial trials, audience expectations for visual fidelity have never been higher. Yet beneath the surface of polished content libraries lies a stubborn operational reality: the vast majority of existing video assets—legacy films, user-generated content, security footage, and brand archives—remain trapped in sub-optimal resolutions, plagued by compression artifacts, noise, and unstable frame rates.

Traditional restoration and upscaling workflows have long been the only remedy, but they come with a punishing cost structure. A single hour of broadcast-ready upscaling can consume days of skilled labor, requiring specialists to navigate intricate parameter sets in applications such as DaVinci Resolve or Adobe After Effects. Hardware demands are equally formidable; rendering farms and dedicated workstations tie up capital that many independent creators and mid-sized studios cannot justify. The result is a persistent gap between the volume of content that needs enhancement and the human and computational resources available to process it.

It is within this gap that the AI video enhancer has transitioned from experimental utility to production infrastructure. By applying deep learning models—particularly convolutional neural networks and transformer-based architectures—to temporal and spatial reconstruction, these systems automate tasks that once demanded painstaking manual oversight. The outcome is not merely faster processing, but a fundamental reconfiguration of what post-production teams can promise in terms of quality and speed.
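To make "temporal and spatial reconstruction" concrete, the sketch below shows two fixed, classical operations of the kind that learned models improve on: averaging co-located pixels across frames (temporal) and repeating pixels to enlarge a frame (spatial). It is a toy baseline for illustration, not how any particular enhancer works; the function names and the tiny 2x2 frames are assumptions made for this example.

```python
from typing import List

# A grayscale frame as rows of pixel intensities (illustrative representation).
Frame = List[List[float]]


def temporal_average(frames: List[Frame]) -> Frame:
    """Average co-located pixels across frames: a crude temporal denoiser."""
    if not frames:
        raise ValueError("need at least one frame")
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [sum(f[y][x] for f in frames) / len(frames) for x in range(width)]
        for y in range(height)
    ]


def upscale_nearest(frame: Frame, factor: int) -> Frame:
    """Repeat each pixel factor x factor times: nearest-neighbor upscaling."""
    if factor < 1:
        raise ValueError("factor must be >= 1")
    out: Frame = []
    for row in frame:
        wide = [px for px in row for _ in range(factor)]
        out.extend(list(wide) for _ in range(factor))
    return out


# Three noisy observations of the same static 2x2 scene (made-up values).
noisy = [
    [[10.0, 20.0], [30.0, 40.0]],
    [[12.0, 18.0], [33.0, 37.0]],
    [[8.0, 22.0], [27.0, 43.0]],
]
denoised = temporal_average(noisy)       # noise cancels across frames
upscaled = upscale_nearest(denoised, 2)  # 2x2 frame becomes 4x4
```

Both operations use the same rule for every pixel, which is why they blur motion and produce blocky edges. A learned model replaces these fixed rules with reconstruction conditioned on the surrounding content.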

Four technical pillars define the utility of an AI video enhancer, and a rigorous assessment of any tool begins with its architecture. Contemporary platforms typically offer four integrated capabilities that, taken together, replace discrete stages of the legacy pipeline.

The practical impact of the AI video enhancer becomes most visible when examined through specific verticals. Across film preservation, digital commerce, and public safety, the technology is producing quantifiable shifts in cost, speed, and output quality.

Cultural institutions and distribution houses face a looming preservation crisis. Analog film masters degrade with each passing decade, and early digital telecine transfers are often locked at 480i or 720p. Traditional restoration involves scanning, manual dirt removal, color timing, and optical printing, a sequence that can consume weeks per feature.

Deploying an AI video enhancer within this workflow changes the economics materially. After initial scanning, machine learning models handle the bulk of upscaling, scratch reduction, and stabilization, producing a 4K master that retains the organic texture of the original celluloid. Human colorists then step in for creative grading rather than corrective repair. National film archives in several European markets have reported restoration timelines shortened by 60 to 70 percent for select catalogue titles, allowing budgets to stretch across larger portions of their collections.
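A back-of-the-envelope calculation shows what a 60 to 70 percent timeline reduction could mean for catalogue coverage. The sketch assumes, purely for illustration, that restoration cost scales linearly with restoration time; real budgets include fixed costs such as scanning that this ignores. The function name is hypothetical.

```python
def titles_multiplier(time_reduction: float) -> float:
    """How many times more titles a fixed budget covers.

    ASSUMPTION: cost per title is proportional to restoration time,
    so cutting time by a fraction r cuts cost per title by r as well.
    """
    if not 0.0 <= time_reduction < 1.0:
        raise ValueError("time_reduction must be in [0, 1)")
    return 1.0 / (1.0 - time_reduction)


for r in (0.60, 0.70):
    print(f"{r:.0%} shorter timelines -> about "
          f"{titles_multiplier(r):.2f}x titles per budget")
```

Under that assumption, the reported range corresponds to roughly 2.5 to 3.3 times as many titles restored for the same spend.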

Global brands now operate content matrices spanning dozens of markets and languages. User-generated reviews, influencer collaborations, and rapid-response social campaigns generate thousands of clips monthly, often sourced from mobile devices with inconsistent lighting and codec compression. Manually elevating this volume to broadcast-safe standards is operationally impossible.

An AI video enhancer functions here as a normalization layer. By ingesting mixed-resolution sources and applying consistent enhancement profiles (sharpening, stabilization, and dynamic range adjustment), marketing teams can enforce visual brand standards without expanding headcount. Early adopters in the consumer electronics sector have documented reductions in post-production spend of approximately 40 percent while accelerating time-to-platform for regional campaigns from days to hours.
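One way to picture the normalization layer is as a single profile applied to every incoming clip, with a per-clip plan derived from it. The sketch below only plans the steps and performs no actual video processing; `EnhancementProfile`, `Clip`, `plan_steps`, the 1080-line target, and the step ordering are all hypothetical choices for illustration, not the interface of any real product.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class EnhancementProfile:
    """Hypothetical brand-wide profile; every field is an assumption."""
    target_height: int = 1080
    stabilize: bool = True
    sharpen: bool = True
    adjust_dynamic_range: bool = True


@dataclass(frozen=True)
class Clip:
    name: str
    width: int
    height: int


def plan_steps(clip: Clip, profile: EnhancementProfile) -> List[str]:
    """Return the ordered steps needed to bring one clip up to the profile."""
    steps: List[str] = []
    if profile.stabilize:
        steps.append("stabilize")
    if clip.height < profile.target_height:
        scale = profile.target_height / clip.height
        steps.append(f"upscale x{scale:.2f}")
    elif clip.height > profile.target_height:
        steps.append("downscale to target")
    if profile.sharpen:
        steps.append("sharpen")
    if profile.adjust_dynamic_range:
        steps.append("adjust dynamic range")
    return steps


profile = EnhancementProfile()
clips = [
    Clip("phone_review.mp4", 1280, 720),    # made-up file names
    Clip("studio_promo.mp4", 1920, 1080),
]
plans = {c.name: plan_steps(c, profile) for c in clips}
```

Because the profile is the only place where standards are defined, mixed-resolution sources receive different scaling steps but end up at the same target, which is the sense in which the layer "normalizes" a campaign's footage.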

Beyond creative industries, public safety and manufacturing operations depend on video evidence and visual inspection. Low-light surveillance, legacy CCTV hardware, and bandwidth-constrained remote feeds routinely produce footage insufficient for license-plate recognition or defect analysis.

In these contexts, AI video enhancer technology focuses on detail recovery rather than aesthetic polish. Enhancement models trained specifically on surveillance datasets can reconstruct legible text, facial geometry, and surface anomalies from sources previously deemed unusable. For industrial quality-control teams, this means retrofitting existing camera networks rather than undertaking costly hardware overhauls, a practical bridge between legacy infrastructure and modern diagnostic requirements.

The maturation of AI video enhancer technology resolves three structural constraints that have shaped the post-production industry for decades.

The proliferation of the AI video enhancer signals something larger than incremental software improvement. It marks a redistribution of production capacity from well-capitalized studios to distributed creators and lean organizations.

When quality is no longer gated by access to expensive facilities or rare technical expertise, the competitive landscape shifts. Independent documentarians can deliver archive-grade restoration. E-commerce teams in emerging markets can match the visual polish of multinational competitors. Security departments can extract actionable intelligence from legacy hardware without waiting for municipal budget cycles.

Importantly, this democratization does not devalue human contribution. On the contrary, by automating the deterministic aspects of enhancement—noise profiles, scaling kernels, frame blending—it elevates the importance of creative direction, narrative judgment, and strategic distribution. The technology sets a higher baseline, but the work of differentiation remains profoundly human.

The AI video enhancer is no longer an experimental plug-in; it is becoming foundational infrastructure for any organization that treats video as a core asset. Its value proposition is clear: it compresses timelines, standardizes quality at scale, and extracts utility from previously degraded sources.

For teams evaluating adoption, the decisive factors should be model transparency, hardware compatibility, output codec support, and licensing clarity—particularly for commercial redistribution. The market is moving quickly from general-purpose models toward domain-specific variants optimized for cinema, surveillance, or e-commerce color science.

Looking forward, the most productive workflows will not be those that attempt to replace human editors with automation, but those that position the AI video enhancer as a first-pass engine. Humans set the creative intent; machines handle the pixel mathematics. In that division of labor, efficiency and quality finally cease to be opposing forces.