For a long time, AI video felt like a preview of a future workflow rather than a workflow itself. The outputs were often exciting, but the surrounding process was fragmented. One tool handled images, another handled motion, another handled audio, and another was needed for comparison or cleanup. As a result, even good results could feel operationally weak. What makes Seedance 2.0 interesting is that it points to a different stage of maturity, where AI video is no longer only about generating clips but about fitting into an actual production process.

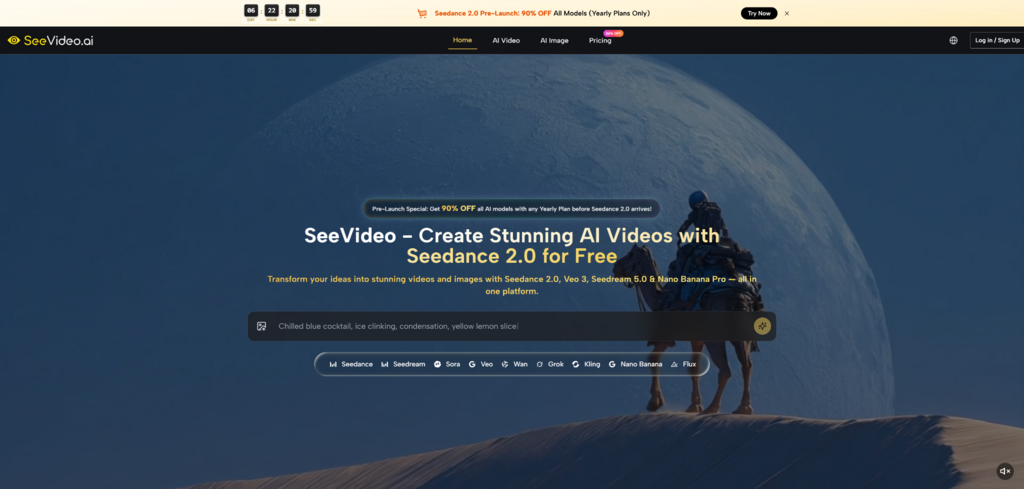

The page I reviewed presents Seedance 2.0 as the core engine inside a broader AI creation environment. That matters because the surrounding system changes the value of the model itself. Seedance 2.0 is described as supporting text, image, and audio inputs while emphasizing fast multi-scene generation and professional-quality output. But what really gives those claims weight is the platform context: other video and image models are available in the same workspace, results can be compared, and the overall structure is designed for creators who need to move from idea to output without constantly leaving the environment.

A tool feels operational when it reduces friction across the entire creative chain, not just in the moment of generation. In video work, that means several things at once. The input has to be flexible enough to match how ideas actually arrive. The generation has to be fast enough to support iteration. The output has to be good enough to justify reuse. And the surrounding workflow has to help people evaluate results instead of trapping them inside one model’s limitations.

Seedance 2.0 appears to matter because it sits near the center of that chain. It is not just the thing that creates motion. It is part of a system that allows creators to choose, compare, refine, and continue.

A production-friendly tool cannot live on visual flair alone. In real use, the value of Seedance 2.0 often rises or falls on whether it can support a sequence rather than a single visual event. Multi-scene generation matters because production work is built from progression.

A teaser moves from setup to reveal. A brand clip moves from context to product. A short narrative moves from one emotional beat to another. Seedance 2.0 is presented around that idea of connected generation, and that is one of the clearest reasons it feels more operational than many earlier tools.

Fast generation is useful, and the page emphasizes speed as part of the experience. But in practical work, continuity often saves more time than speed alone. A quick result that needs heavy correction is less valuable than a slightly more structured result that already respects the flow of the concept.

In that sense, multi-scene capability is not only a creative feature. It is also an efficiency feature. It reduces the gap between generated footage and usable footage.

This is not only relevant to filmmakers. Social media teams, ecommerce brands, agencies, and solo creators all benefit from better structure. Many do not need complex storytelling, but they do need outputs that feel intentional and complete. Even a simple ad becomes stronger when the sequence feels planned.

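The site suggests a workflow that is simple on the surface but strategically useful. It runs in four stages: choosing the starting material, choosing the model, supplying inputs, and evaluating the results.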

The first decision is the project’s starting material. Text-to-video is useful when the creator is still exploring and wants the model to interpret an idea from language. Image-to-video is more suitable when there is already a visual reference that should remain central to the result.

At the model stage, Seedance 2.0 becomes the more logical choice when the project needs broader input support and a stronger sense of sequence. The platform makes clear that not every model serves the same role, which keeps the choice tied to what the project actually needs.

The next stage is supplying inputs: users enter text, upload images, or guide the process with audio. The key point is that control can come from more than one source. That is useful because professional work often depends on reference materials rather than pure imagination.

The final stage is not simply export. It is evaluation. The platform encourages users to compare outputs from different models and choose the one that best fits the brief. This is one of the strongest signs that the product is designed for operational use rather than demo appeal.
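
For readers who think in code, the four stages above can be sketched as a small data model. This is only an illustration of the workflow's shape, not the platform's API: the class names, the `pick_best` helper, the model labels, the file names, and every score are hypothetical placeholders, and the scores stand in for a reviewer's own judgment against the brief.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class Brief:
    """Stages 1 and 3: the starting material and the inputs that guide generation."""
    prompt: str = ""
    image_paths: List[str] = field(default_factory=list)
    audio_path: Optional[str] = None

    def mode(self) -> str:
        # Image-to-video when a visual reference exists, otherwise text-to-video.
        return "image-to-video" if self.image_paths else "text-to-video"


@dataclass
class Candidate:
    """Stages 2 and 4: one model's clip plus a reviewer's scores for it."""
    model: str                # hypothetical label, not a real model identifier
    clip_path: str            # hypothetical output file
    scores: Dict[str, float]  # reviewer-assigned, e.g. on a 0-5 scale


def pick_best(candidates: List[Candidate], weights: Dict[str, float]) -> Candidate:
    """Return the candidate with the highest weighted score for this brief."""
    def weighted(c: Candidate) -> float:
        return sum(w * c.scores.get(name, 0.0) for name, w in weights.items())
    return max(candidates, key=weighted)


# Placeholder example: a brief with an image reference and two reviewed clips.
brief = Brief(prompt="setup, then product reveal", image_paths=["product.png"])
candidates = [
    Candidate("model-a", "a.mp4", {"continuity": 4, "fidelity": 4}),
    Candidate("model-b", "b.mp4", {"continuity": 2, "fidelity": 5}),
]
# This brief values sequence continuity over single-frame fidelity.
best = pick_best(candidates, {"continuity": 0.6, "fidelity": 0.4})
print(brief.mode(), best.model)
```

The point of the sketch is the last step: the choice is made by scoring several outputs against the brief, not by trusting whichever model ran first.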

A model becomes more useful when it does not need to solve every problem by itself. On the platform, Seedance 2.0 sits alongside image tools like Seedream and Nano Banana and video options like Veo 3, Sora 2, Kling, and Seedance 1.5. That creates a broader stack rather than a single-track pipeline.

One practical benefit is that image generation and video generation can exist close together. A creator can develop a strong visual reference first and then use that as the foundation for motion. That is a more stable workflow than asking a single text prompt to invent everything at once.

Another benefit is that creators can compare results across models instead of forcing every concept through one engine. This reduces both wasted effort and false certainty. In my observation, strong AI workflows are rarely built on faith in one model. They are built on knowing which tool to test first and which alternatives to evaluate next.

Context switching is a hidden cost in creative work. Every time a team moves between separate subscriptions, interfaces, and asset libraries, friction builds. A unified environment does not remove all complexity, but it can make the process feel more continuous.

This is where Seedance 2.0 starts to feel less like a model announcement and more like a workflow answer. It supports a way of working, not just a way of generating.

Any honest evaluation should mention that AI video still requires iteration. Results depend heavily on prompt clarity, reference quality, and the user’s patience during refinement. Multi-scene support is valuable, but it does not guarantee that every sequence will be perfect on the first attempt. Audio-guided generation expands control, but it still benefits from thoughtful inputs and realistic expectations.

That is not a flaw unique to this product. It is simply the current nature of generative video systems. The stronger approach is to use the tool where its structure offers real leverage rather than expecting it to solve everything automatically.

What stands out most about Seedance 2.0 is not any single claim on the page. It is the combination of capabilities and context. Multi-scene generation matters. Audio input matters. Text and image support matter. But together, inside a platform built for comparison and reuse, they point to something larger: AI video is becoming operational.

That is a meaningful shift. It moves the conversation away from spectacle and toward usefulness. It suggests that creators may spend less time asking whether AI video is possible and more time deciding how to fit it into actual production thinking. Seedance 2.0, in that sense, is not only a video model. It is part of a more mature answer to how AI creation can work when the goal is not just to impress, but to produce.