Most visual bottlenecks do not begin with a lack of ideas. They begin when a good image needs to become several useful assets at once. A launch visual may need cleanup for a landing page, a sharper version for ads, a different style for social media, and a motion variant for short-form content. In that kind of workflow, an AI Photo Editor becomes less about novelty and more about output efficiency. It helps one image travel further without forcing the user to rebuild the work from scratch each time.

That matters because the modern content cycle is unusually demanding. Teams are expected to publish more often, adapt faster, and test more variations than before. Yet many still rely on editing processes designed for slower, more manual production. The result is familiar: a great concept gets trapped inside a slow workflow. What makes a platform like this relevant in 2026 is that it treats editing not as a standalone technical task, but as part of a larger asset system where one source image may need to serve many formats and objectives.

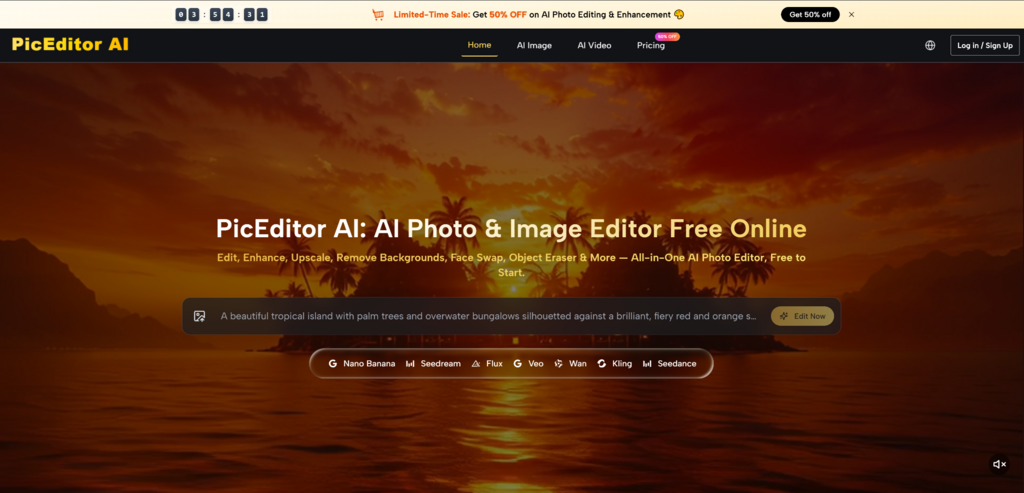

In my view, that is the stronger way to understand the product. It is not simply a tool for correcting photos. It is a workspace for extending the useful life of visual material. Enhancement, upscaling, object removal, style change, face swap, and image-to-video generation all support that same larger goal: helping users get more usable output from the same starting point.

Why One Image Now Needs Multiple Outcomes

A few years ago, a finished image often stayed in one lane. Today that is much less common. A product photo may appear in a storefront banner, a paid ad, a short video, a social post, and an email campaign within the same week. Even when the core visual idea remains the same, the surrounding format keeps changing.

This is why editing tools are increasingly judged by flexibility rather than by isolated features alone. The question is no longer just whether a platform can improve an image. The better question is whether it can help a team repurpose, adapt, and expand that image across multiple needs without introducing too much friction.

The larger the content surface area becomes, the more painful manual production feels. Traditional editing software is still valuable, especially for detailed retouching, but it can become slow when teams need quick turnarounds instead of deep craft on every single asset.

An AI Image Editor fits this new reality because it shortens the distance between decision and output. Users can move from source image to adjusted image through a more direct loop: upload, choose the type of change, describe the result, and review what comes back. That kind of workflow feels especially relevant when the same visual idea needs several adaptations in a short time.

This is not only about convenience. It is also about resource efficiency. If one image can support a sharper version, a cleaned-up version, a restyled version, and a motion variation, then the value of that original asset increases. That is a meaningful advantage for smaller teams, creators, and marketers who need more output without expanding production overhead at the same pace.

One of the more interesting parts of the platform is that it does not present image editing as one generic capability. It separates work by model and output type, which makes the system feel more like a flexible environment than a single-purpose editor.

The site highlights image models such as GPT-4o, Nano Banana, Nano Banana 2, Flux Kontext Pro, Flux Kontext Max, Seedream 4.0, Seedream 5.0 Lite, Qwen Image Edit, and Grok Imagine Image. It also includes video models such as Veo 3, Veo 3.1 Basic, Veo 3.1 Premium, Kling 2.5, Kling 2.1 Pro, Kling 2.1 Master, Seedance 1.0 Lite, Seedance 1.0 Pro, Seedance 1.5 Pro, Wan 2.5, Runway Gen 4, and Grok Imagine Video.

That range matters because different jobs require different strengths. Some edits need realism. Some need precise contextual control. Some benefit more from speed than from depth.

The useful idea here is not that more models automatically mean better results. It is that the user has a better chance of matching the right engine to the right task.

Nano Banana is presented as strong for realism, style transfer, and character consistency. The platform also notes support for up to four reference images, which is especially useful when the user wants to preserve identity or maintain a stable visual look across several outputs. In practical terms, that matters for portraits, character-based content, branded visuals, and repeatable campaigns.

Flux appears more aligned with context-aware editing and targeted adjustments, including text replacement inside images and object-level edits. That makes it better suited for situations where the user is not asking for a broad reinvention, but for a controlled correction inside an existing composition.

Seedream seems positioned for speed. That becomes important when a team wants to test several visual directions quickly instead of treating one result as final. In many real content workflows, the fastest useful version is more valuable than the theoretically perfect version that arrives too late.
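The model descriptions above amount to a lookup from task to engine. As a rough illustration, here is a minimal Python sketch of that idea. The task labels and the `pick_model` helper are hypothetical, invented for this example. The mapping reflects this article's reading of the site rather than any official API, and the four-image limit is applied only to Nano Banana because that is the only model the limit is mentioned for.

```python
# Hypothetical task labels mapped to the model families described above.
# This is an illustrative reading of the site, not an official API.
MODEL_FOR_TASK = {
    "character_consistency": "Nano Banana",
    "style_transfer": "Nano Banana",
    "text_replacement": "Flux Kontext",
    "object_edit": "Flux Kontext",
    "fast_exploration": "Seedream",
}

# The site notes support for up to four reference images (stated for Nano Banana).
NANO_BANANA_MAX_REFERENCES = 4


def pick_model(task: str, reference_images: int = 0) -> str:
    """Return the model family this article associates with a task label."""
    if task not in MODEL_FOR_TASK:
        raise ValueError(f"unknown task: {task!r}")
    model = MODEL_FOR_TASK[task]
    # Limits for other models are not stated in the article, so only
    # Nano Banana requests are checked here.
    if model == "Nano Banana" and reference_images > NANO_BANANA_MAX_REFERENCES:
        raise ValueError("Nano Banana is described as supporting up to four reference images")
    return model
```

The point of the sketch is the design choice, not the specific table: picking a model becomes an explicit, reviewable decision instead of a default.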

The platform’s process is straightforward, which is one reason it feels accessible even though many models sit underneath.

The workflow begins with an uploaded image. This detail matters because the product is not only about generating visuals from scratch. It is also about transforming images that already contain framing, subject placement, tone, and visual intent.

Once the image is uploaded, the user chooses the type of modification or the model that fits the task. This can include more familiar editing actions such as enhancement or object removal, but it can also mean a model-driven transformation when the goal is stylistic or generative.

The user then describes what should happen to the image. According to the site, the platform analyzes the picture and applies the edit based on that instruction. This step is crucial because the quality of direction strongly shapes the result. In my experience with tools in this category, the clearer the instruction, the more reliable the output tends to be. A request such as "remove the cup on the left and keep the table surface unchanged" gives the model far more to work with than "clean this up."

After processing, the user reviews the output and decides whether another pass is needed. That is worth saying plainly because AI-assisted editing is iterative: a first result is a draft to evaluate, not a guaranteed final.

Sometimes the first generation is strong enough to use. Sometimes a second or third pass creates a noticeably better result. That does not weaken the platform’s value. In many cases, repeating a generation is still much faster than building a comparable edit through a fully manual workflow.
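The review-and-repeat loop described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `generate` and `accept` are hypothetical stand-ins for whatever produces an edit and whoever reviews it, since the article does not describe the platform's actual interface.

```python
from typing import Callable, Optional


def edit_until_acceptable(
    generate: Callable[[str, int], str],
    accept: Callable[[str], bool],
    prompt: str,
    max_passes: int = 3,
) -> Optional[str]:
    """Re-run a generation until the reviewer accepts it or the pass budget runs out."""
    for attempt in range(1, max_passes + 1):
        result = generate(prompt, attempt)
        if accept(result):
            return result
    return None  # no pass was accepted within the budget


# Hypothetical stubs: a fake generator and a reviewer who accepts the second pass.
def fake_generate(prompt: str, attempt: int) -> str:
    return f"{prompt} (pass {attempt})"


def fake_accept(result: str) -> bool:
    return result.endswith("(pass 2)")


final = edit_until_acceptable(fake_generate, fake_accept, "remove background")
```

Capping the number of passes keeps the loop honest: if three attempts do not produce a usable result, the better move is usually to revise the prompt or switch models rather than keep regenerating.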

The platform becomes easier to evaluate when viewed through common production needs rather than abstract claims.

This table shows why the product is more than a casual image toy. It sits closer to a production utility, especially for people who work with the same asset across multiple channels.

The image-to-video side of the platform is more important than it may look at first. Once a still image can also become a motion asset, the meaning of editing expands. The goal is no longer only to perfect a picture. It is to prepare that picture for wider use.

That is especially relevant for content teams. A polished visual can become a motion experiment without requiring a separate creative stack from the start. For social media, product marketing, and short-form storytelling, that kind of transition can be very efficient.

A team with approved imagery often does not want to restart creative direction just to test movement. The ability to animate an existing asset means the original visual work can continue delivering value in new formats.

This may be the most practical insight behind the platform. The better the tool becomes at extending one image into several useful outputs, the more valuable each approved asset becomes.

AI Image Editor lowers the barrier to editing, but it does not remove the need for judgment. Source image quality still matters. Prompt clarity still matters. Model choice still matters. Users expecting frame-by-frame manual control may still find some results less exact than traditional software.

There is also a clear usage ladder in the pricing structure. The site positions the service as free to start, while paid tiers add advantages such as broader credit access, no watermark, private generation, commercial license support, priority processing, and higher capacity. That seems reasonable for a system meant to support both casual experimentation and heavier production use.

What makes the platform timely is not only that it edits images quickly. It is that it reflects how visual work now happens. Teams do not just need better pictures. They need adaptable assets, faster iterations, and simpler paths from idea to output.

That is why this kind of product feels especially relevant in 2026. It treats image editing as a reusable workflow rather than a single isolated action. Start with one visual, choose the right path, describe the intended change, and keep refining until the result is usable across more than one context. For many modern teams, that is a much more valuable promise than editing power alone.